
Hugging Face Text Generation Inference (TGI): Deploy and Serve Your LLM Model Efficiently

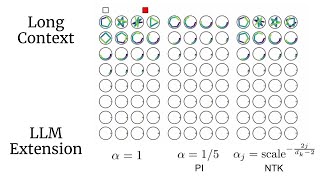

Welcome to my latest video, where we review Hugging Face Text Generation Inference (TGI)! You'll discover:

- What TGI is and why it's a game-changer for LLM deployment
- The names of the 4 ways to deploy your Hugging Face model
- A side-by-side comparison of local vs. Docker deployment performance
- Step-by-step code implementation for both transformer and TGI shell scripts
- Checking Docker logs for troubleshooting
- Leveraging the Swagger API for seamless integration

If your system does not have a GPU, please see the section below: https://huggingface.co/docs/text-gene...

Scripts used in the video are available at: https://gitlab.com/Nayan.1989/youtube...

For more details on Hugging Face TGI, refer to: https://huggingface.co/docs/text-gene...

#huggingface #textgeneration #llm #deployment #docker #swagger #machinelearning #TGI #coding #tutorial
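To make the API integration step a bit more concrete, here is a minimal sketch of calling a running TGI server from Python. It is not the script from the video. It assumes a TGI container is already serving a model and is reachable at `http://localhost:8080` (a placeholder; the real host and port depend on how you mapped ports when starting Docker). It uses TGI's `/generate` route with an `inputs` string and a `max_new_tokens` parameter, and only the Python standard library. The helper names `build_generate_request` and `generate` are made up for this example.

```python
import json
import urllib.request

# Assumption: a TGI container is listening here; change it to match your own port mapping.
TGI_URL = "http://localhost:8080"


def build_generate_request(prompt, max_new_tokens=20, base_url=TGI_URL):
    """Build (but do not send) a POST request for TGI's /generate route."""
    payload = {
        "inputs": prompt,
        "parameters": {"max_new_tokens": max_new_tokens},
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + "/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt, max_new_tokens=20, base_url=TGI_URL, timeout=60):
    """Send the prompt to the server and return the generated text."""
    request = build_generate_request(prompt, max_new_tokens, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.loads(response.read().decode("utf-8"))
    # /generate responds with a JSON object that carries a "generated_text" field.
    return body["generated_text"]
```

With the server up, `print(generate("What is deep learning?"))` should print the model's completion. If the call fails with a connection error, the container logs (`docker logs <container>`) are usually the first place to look.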